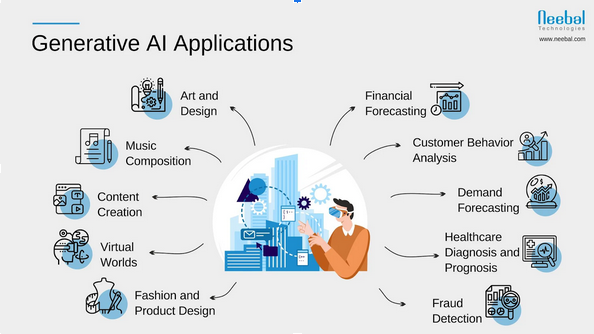

Generative AI has business applications beyond those covered by discriminative models. Let's look at the general-purpose models that achieve impressive results on a wide range of problems. Numerous algorithms and related models have been developed and trained to create new, realistic content from existing data. Several of these models, each with distinctive mechanisms and capabilities, are at the forefront of advances in fields such as image generation, text translation, and data synthesis.

A generative adversarial network, or GAN, is a machine learning framework that pits two neural networks, a generator and a discriminator, against each other, hence the "adversarial" part. The contest between them is a zero-sum game, where one agent's gain is the other agent's loss. GANs were invented by Ian Goodfellow and his colleagues at the University of Montreal in 2014.

Both the generator and the discriminator are often implemented as CNNs (convolutional neural networks), especially when working with images. The adversarial nature of GANs lies in a game-theoretic scenario in which the generator network must compete against an adversary.

Its adversary, the discriminator network, attempts to distinguish between samples drawn from the training data and samples drawn from the generator. A GAN is considered successful when the generator creates a fake sample so convincing that it can fool both the discriminator and humans.
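The zero-sum contest described above can be made concrete with the minimax value function from the original GAN formulation, V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))]. The sketch below is only illustrative: the discriminator outputs are made-up probabilities, not the result of any trained network, and the variable names are hypothetical.

```python
import math

def value_function(d_real, d_fake):
    """Minimax value V(D, G): the discriminator tries to raise it, the generator to lower it.

    d_real: discriminator's "is real" probabilities for training samples
    d_fake: discriminator's "is real" probabilities for generated samples
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# Made-up discriminator outputs, for illustration only.
d_real = [0.9, 0.8, 0.95]          # real images, mostly judged real
weak_fakes = [0.1, 0.2, 0.05]      # fakes that are easily spotted
strong_fakes = [0.6, 0.7, 0.55]    # fakes that fool the discriminator

v_weak = value_function(d_real, weak_fakes)
v_strong = value_function(d_real, strong_fakes)

# Zero-sum: whatever the discriminator gains, the generator loses.
print(f"weak generator:   D payoff {v_weak:.3f}, G payoff {-v_weak:.3f}")
print(f"strong generator: D payoff {v_strong:.3f}, G payoff {-v_strong:.3f}")
```

As the fakes become more convincing, the value drops, which is a loss for the discriminator and an equal gain for the generator.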

First described in a 2017 Google paper, the transformer architecture is a machine learning framework that is highly effective for natural language processing (NLP) tasks. It learns to find patterns in sequential data such as written text or spoken language. Based on the context, the model can predict the next element of the sequence, for example, the next word in a sentence.

A vector represents the semantic features of a word, with similar words having vectors that are close in value. For example, the word crown might be represented by the vector [3, 103, 35], while apple could be [6, 7, 17] and pear might look like [6.5, 6, 18]. Of course, these vectors are only illustrative; real ones have many more dimensions.
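To see what "close in value" means in practice, here is a small sketch that compares the three illustrative vectors above. Cosine similarity is one common closeness measure; the text does not say which measure is meant, so treat that choice as an assumption.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: values near 1.0 mean similar direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# The illustrative vectors from the text (real embeddings have many more dimensions).
crown = [3, 103, 35]
apple = [6, 7, 17]
pear = [6.5, 6, 18]

sim_fruit = cosine_similarity(apple, pear)
sim_mixed = cosine_similarity(apple, crown)

print(f"apple vs pear:  {sim_fruit:.3f}")
print(f"apple vs crown: {sim_mixed:.3f}")
```

The two fruits score much closer to 1.0 than the fruit and the crown, matching the intuition that similar words sit near each other.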

At this stage, information about the position of each token within the sequence is added in the form of another vector, which is summed with the input embedding. The result is a vector reflecting the word's initial meaning and its position in the sentence. It is then fed to the transformer neural network, which consists of two blocks.
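The summing step above can be sketched as follows. The text does not say how the position vector is built; this example assumes the sinusoidal scheme from the original transformer paper, and the embedding values are made up.

```python
import math

def positional_encoding(position, d_model):
    """Sinusoidal position vector: sine on even indices, cosine on odd indices."""
    encoding = []
    for i in range(d_model):
        # Each pair of dimensions (2k, 2k+1) shares one wavelength.
        angle = position / (10000 ** ((2 * (i // 2)) / d_model))
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding

def add_position(embedding, position):
    """Sum the token embedding with the vector for its position."""
    encoding = positional_encoding(position, len(embedding))
    return [e + p for e, p in zip(embedding, encoding)]

token_embedding = [0.5, -0.2, 0.1, 0.7]  # hypothetical 4-dimensional embedding

at_pos0 = add_position(token_embedding, 0)
at_pos3 = add_position(token_embedding, 3)

print("position 0:", [round(v, 3) for v in at_pos0])
print("position 3:", [round(v, 3) for v in at_pos3])
```

The same word ends up with a different input vector depending on where it appears, which is how the network can tell word order apart.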

Mathematically, the relationships between words in a phrase look like distances and angles between vectors in a multidimensional vector space. The self-attention mechanism can detect the subtle ways in which even distant data elements in a sequence influence and depend on each other. In the sentences "I poured water from the pitcher into the cup until it was full" and "I poured water from the pitcher into the cup until it was empty", a self-attention mechanism can distinguish the meaning of "it": in the former case the pronoun refers to the cup, in the latter to the pitcher.
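A stripped-down sketch of scaled dot-product self-attention shows how such relationships become numeric weights. It is heavily simplified: a real transformer applies learned query, key, and value projections, while this version uses the input vectors directly, and the three token vectors are hand-picked for illustration.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product attention with queries = keys = values = the inputs."""
    d = len(vectors[0])
    weights, outputs = [], []
    for query in vectors:
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d)
                  for key in vectors]
        row = softmax(scores)
        weights.append(row)
        # Each output is a weighted mix of all input vectors.
        outputs.append([sum(w * v[i] for w, v in zip(row, vectors))
                        for i in range(d)])
    return weights, outputs

tokens = ["pitcher", "cup", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.2, 0.9]]  # made-up 2-dimensional vectors

weights, outputs = self_attention(vectors)
for name, weight in zip(tokens, weights[2]):
    print(f"'it' attends to {name!r} with weight {weight:.2f}")
```

With these toy vectors, "it" places more weight on "cup" than on "pitcher"; in a trained model, the surrounding context ("full" or "empty") is what would shift that balance.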

A final layer, typically a softmax function, is used at the end to calculate the probability of different outputs and choose the most probable option. The generated output is then appended to the input, and the whole process repeats.

A diffusion model is a generative model that creates new data, such as images or sounds, by mimicking the data on which it was trained.

Think of the diffusion model as an artist-restorer who has studied paintings by old masters and can now paint their own canvases in the same style. The diffusion model does roughly the same thing in three main stages. Forward (direct) diffusion gradually introduces noise into the original image until the result is just a chaotic set of pixels.
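The gradual noising stage can be sketched in a few lines. The example assumes a DDPM-style formulation with a linear noise schedule, x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 - ᾱ_t)·ε; the schedule values and the four-"pixel" image are illustrative assumptions, not details from the text.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise amounts that grow linearly (an assumed schedule)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """Fraction of the original signal's variance that survives after each step."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def noisy_sample(x0, alpha_bar, rng):
    """Jump straight to a noise level: scaled signal plus scaled Gaussian noise."""
    signal = math.sqrt(alpha_bar)
    noise = math.sqrt(1.0 - alpha_bar)
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in x0]

rng = random.Random(0)
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
pixels = [0.9, -0.3, 0.5, 0.1]  # a tiny stand-in for an image

slightly_noisy = noisy_sample(pixels, alpha_bars[9], rng)   # early step
pure_noise = noisy_sample(pixels, alpha_bars[-1], rng)      # final step

print(f"signal kept after 10 steps:   {math.sqrt(alpha_bars[9]):.4f}")
print(f"signal kept after 1000 steps: {math.sqrt(alpha_bars[-1]):.4f}")
```

Early on almost all of the original image survives; by the last step the signal coefficient is close to zero and the sample is essentially pure noise.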

Returning to our artist-restorer analogy, direct diffusion is handled by time, which covers the painting with a network of cracks, dust, and grease; sometimes the painting is reworked, adding certain details and removing others. Learning is like studying a painting to grasp the old master's original intent. The model carefully analyzes how the added noise alters the data.

This understanding allows the model to effectively reverse the process later. After learning, the model can reconstruct the distorted data via the process called reverse diffusion. It starts from a noise sample and removes the blur step by step, the same way our artist gets rid of contaminants and later paint layers.
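Why does learning how noise alters the data make reversal possible? Under the same DDPM-style assumption, x_t = sqrt(ᾱ)·x_0 + sqrt(1 - ᾱ)·ε, a model that can predict the added noise ε lets us solve for the clean sample. The sketch below cheats by passing in the true noise where a trained network's prediction would go, so it only demonstrates the algebra, not a real sampler.

```python
import math
import random

def add_noise(x0, alpha_bar, rng):
    """Noise a sample and also return the noise that was used."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
          for x, e in zip(x0, eps)]
    return xt, eps

def estimate_clean(xt, predicted_eps, alpha_bar):
    """Invert the noising formula, given a prediction of the noise."""
    return [(x - math.sqrt(1.0 - alpha_bar) * e) / math.sqrt(alpha_bar)
            for x, e in zip(xt, predicted_eps)]

rng = random.Random(0)
original = [0.9, -0.3, 0.5, 0.1]   # tiny stand-in for an image
alpha_bar = 0.3                    # hypothetical noise level

noisy, true_eps = add_noise(original, alpha_bar, rng)
# A trained model would supply predicted_eps; here the true noise stands in for it.
restored = estimate_clean(noisy, true_eps, alpha_bar)

print("noisy:   ", [round(v, 3) for v in noisy])
print("restored:", [round(v, 3) for v in restored])
```

In practice the prediction is imperfect, which is why real samplers remove noise in many small steps rather than in one jump.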

Think of latent representations as the DNA of an organism. DNA holds the core instructions needed to build and maintain a living being. Likewise, latent representations contain the fundamental elements of the data, allowing the model to regenerate the original information from this encoded essence. If you change the DNA molecule just slightly, you get a completely different organism.

For example, the woman in the second image at the top right looks a bit like Beyoncé, yet we can see that it's not the pop singer. In image-to-image translation, as the name suggests, generative AI transforms one type of image into another. There is a range of image-to-image translation variations. One of them, style transfer, involves extracting the style from a famous painting and applying it to another image.

The results of all these programs are pretty similar. However, some users note that, on average, Midjourney draws a bit more expressively, while Stable Diffusion follows the request more closely at default settings. Researchers have also used GANs to produce synthesized speech from text input.

The main task is to perform audio analysis and create "dynamic" soundtracks that can change depending on how users interact with them. For example, the music may change according to the atmosphere of a game scene or the intensity of the user's workout in the gym.

Logically, videos can also be generated and converted in much the same way as images. While 2023 was marked by breakthroughs in LLMs and a boom in image generation technologies, 2024 has seen significant advances in video generation. At the beginning of 2024, OpenAI introduced an impressive text-to-video model called Sora. Sora is a diffusion-based model that generates video from static noise.

NVIDIA's Interactive AI Rendered Virtual World

Such synthetically created data can help develop self-driving cars, as they can use generated virtual world training datasets for pedestrian detection. Of course, generative AI is no exception.

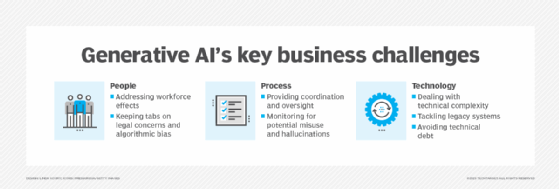

When we say this, we do not mean that tomorrow machines will rise up against humanity and destroy the world. Let's be honest, we're pretty good at it ourselves. However, since generative AI can self-learn, its behavior is difficult to control. The outputs it provides can often be far from what you expect.

That's why many are implementing dynamic and intelligent conversational AI models that customers can interact with through text or speech. GenAI powers chatbots by understanding and generating human-like text responses. In addition to customer service, AI chatbots can supplement marketing efforts and support internal communications. They can also be integrated into websites, messaging apps, or voice assistants.
